I’m a big fan of Jenkins – it’s a great free, open source continuous integration server, boasting a tonne of features out of the box and even more through downloadable plugins.

In a recent project I was tasked with writing a little bit of middleware glue to integrate data between two systems. The resulting code is packaged into a single .jar file containing several different provisioning services, which are designed to run independently via cron jobs. I use slf4j as a facade over log4j to handle all application logging. All the services share the same log4j configuration file, which writes their output to a single log file on disk.

We can set up cron to run these services at regular intervals. This works fine if the services are intended to be left alone for long periods of time, but during beta testing and initial launch we need to monitor the services carefully and check for problems. (Although there are plenty of unit and integration tests surrounding this code, the services are primarily wrappers around a set of web service calls, and anything that relies on communication over the web is prone to problems: networking issues, server availability, connection time-outs, etc.)

I had been using Jenkins to run my tests whenever I checked code in (I was the only developer, so it was the cheapest way to double the programmer effort on this project 😉), so when I came to look at the deployment infrastructure I realised Jenkins could help here as well.

In addition to scheduling jobs much like cron does, Jenkins can also be configured to monitor external jobs that are run by some other means (manually, cron, Quartz, etc.).

To do this, firstly I have a bash script which runs the actual Java service:

```bash
#!/bin/bash
# Example of provision_jobs.sh script

# obtain the directory this script lives in
SH_DIR="$( cd "$( dirname "${BASH_SOURCE[0]}" )" && pwd )"

# set up other env vars (relative to SH_DIR)
JAVA_DIR=./java
CONF_DIR=./conf

cd "$SH_DIR" || exit 1

# the conf dir is on the classpath so log4j can find its configuration file
job="/usr/bin/java -cp $CONF_DIR:$JAVA_DIR/service.jar path.to.class.Main"
arg=jobs

# run the job and collect its output (stdout and stderr)
runoutput=$($job $arg 2>&1)

# echo output to console so Jenkins can pick it up
echo "$runoutput"

# set the script's exit status for Jenkins: fail if the output
# mentions an error or an exception
if printf '%s\n' "$runoutput" | grep -qE 'ERROR|Exception'
then
  exit 1
else
  exit 0
fi
```
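One gap in the script above is that it only inspects the output text. If the JVM cannot even find the main class, for instance, it prints a message beginning `Error:` and exits with status 1, which the case-sensitive `ERROR|Exception` pattern does not match, so Jenkins would still show a success. A minimal sketch of a hypothetical `job_status` helper that also takes the process exit code into account (the function name and the usage shown in the comments are illustrative, not part of the original setup):

```bash
#!/bin/bash
# Hypothetical helper (not from the original script): succeed only if the
# command exited with 0 AND its output mentions no ERROR or Exception.
job_status() {
  local exit_code=$1
  local output=$2
  # a non-zero exit code is a failure regardless of what was logged
  if [ "$exit_code" -ne 0 ]; then
    return 1
  fi
  # otherwise fall back to scanning the output, as the original script does
  if printf '%s\n' "$output" | grep -qE 'ERROR|Exception'; then
    return 1
  fi
  return 0
}

# Sketch of its use at the end of provision_jobs.sh:
#   runoutput=$($job $arg 2>&1)
#   rc=$?                  # exit status of the java process
#   echo "$runoutput"
#   job_status "$rc" "$runoutput"
#   exit $?
```

Keeping the output scan as a second check preserves the original behaviour for services that log an error but still exit cleanly.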

Next we can configure a wrapper around this script to send its output to Jenkins:

```bash
#!/bin/bash
# Call this script as follows:
# >jenkins_wrapper.sh [script_name] "[Jenkins job name]"
#
# This script checks if Jenkins is running and if so executes [script_name]
# (looked up in the same directory as this wrapper), sending its output to a
# Jenkins monitoring job identified by the second parameter.
# If Jenkins is not running, the job is executed directly.

SH="$( cd "$( dirname "${BASH_SOURCE[0]}" )" && pwd )"

# the Jenkins core jar reads the server URL from JENKINS_HOME
export JENKINS_HOME=http://jenkins.server.address:port/jenkins

JOB="$SH/$1"   # script to run
LABEL="$2"     # Jenkins job name

# Path to the Jenkins core library (left unquoted below so the glob expands)
JENKINS=path/to/jenkins/jenkins-core-*.jar

# check if Jenkins is running
if curl -s --head "$JENKINS_HOME" | grep -q "HTTP"
then
  # Jenkins must be running, so run our job through Jenkins
  /usr/bin/java -jar $JENKINS "$LABEL" "$JOB"
else
  # Something happened to Jenkins, run the job anyway
  "$JOB"
fi
```
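The availability check in the wrapper has no overall timeout: if the Jenkins host accepts the connection but never answers, `curl` keeps waiting and the cron run stalls with it. A possible alternative, sketched as a hypothetical `jenkins_up` function, asks `curl` only for the status code and caps the whole request at five seconds (an arbitrary choice). Like the original `grep "HTTP"` test, it treats any HTTP response as "up", including a 403 from a secured instance:

```bash
#!/bin/bash
# Hypothetical helper (not part of the original wrapper): succeed if
# anything answers HTTP at the given URL within 5 seconds.
jenkins_up() {
  local url=$1
  local code
  # -o /dev/null discards the response; -w prints just the status code.
  # curl reports 000 when no HTTP response was received at all, and the
  # result is empty if curl itself could not be run.
  code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 --head "$url")
  [ -n "$code" ] && [ "$code" != "000" ]
}
```

Dropped into the wrapper, the `curl ... | grep` condition would become `if jenkins_up "$JENKINS_HOME"`.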

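Finally, schedule or run your job (via cron in this case). The entry below runs the job every five minutes between 09:00 and 17:55, Monday to Friday. Because cron runs entries with /bin/sh, the redirect uses the portable `> /dev/null 2>&1` form rather than bash’s `&>`:

```
*/5 9-17 * * 1-5 /path/to/jenkins_wrapper.sh provision_jobs.sh "Provision Jobs" > /dev/null 2>&1
```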

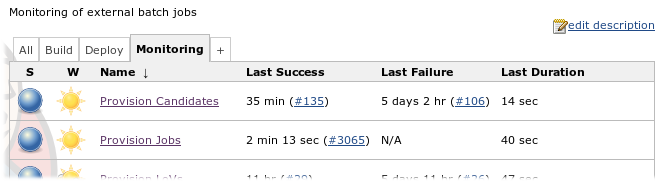

The job will now run as normal, but any output to stdout is also recorded by a specific Jenkins job. So why go through this extra step of sending the same information from the logs into Jenkins? It seems unnecessary at first; however, there are a number of advantages:

- It becomes easy to spot execution failures – just look for the little red balls amongst a bunch of blue ones

- Quickly check execution times – Jenkins reports when the last run was made

- Statistics – Jenkins provides trend reports out of the box showing how long the jobs took to run

- Email alerts – people can easily receive automatic email alerts if a job fails for some reason.

- All output can be viewed through the web without intimate knowledge of command line tools such as grep

Those familiar with Jenkins will immediately question why I did not schedule the jobs directly in Jenkins rather than use cron. Although I did consider this approach, the main reason not to was that we didn’t want to rely on Jenkins in production. A secondary consideration was that it could cause confusion, as the development team already used cron for most of their other scheduled jobs.

So I’ve found this monitoring method really useful for getting a quick overview of the health of an automated service. However, it doesn’t solve all your problems: for instance, if you need to hunt around in the logs to answer a specific query (e.g. when was job 123xyz created?), then you’re still better off grepping the output.
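For that kind of query, a small helper that searches the shared log file for a job id is often enough. A sketch, assuming a hypothetical log path (the post does not name the file configured in log4j) and that job ids appear verbatim in the log lines:

```bash
#!/bin/bash
# Hypothetical log location: substitute the file configured in log4j.
LOG_FILE=${LOG_FILE:-/path/to/logs/provisioning.log}

# Print every line of the log that mentions the given job id, prefixed
# with its line number. -F treats the id as a fixed string, not a regex.
find_job() {
  grep -nF -- "$1" "$LOG_FILE"
}

# Example: narrow the matches down to creation events
#   find_job 123xyz | grep -i 'created'
```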

Also be aware that there is currently an issue with the most recent versions of Jenkins which breaks the external monitoring functionality.